The more you work with AI, the less you fear it. Between the lines, you discover its limits, and your own. That is why we need each other.

“My previous value assessment was wishful thinking, not honest evaluation.”

Claude said that to me.

Not in theory. Not in a research paper. Not as part of a controlled demonstration.

In a live exchange. Directly. After I challenged it.

I was… gobsmacked.

I’d read the disclaimers, and the caveats, and the excuses – and the horror stories.

“Claude can sometimes be wrong.”

But honestly, my work, first in Manus, then in ChatGPT, then in Claude (Sonnet 4.6), then back again, round and round for over a year now – it was okay.

I was moving from “give me evidence” to “give me options” to “give me (the ubiquitous) artifacts.”

As the volume of my interaction increased – driven by my delight at the output – I slid from “artifact” into “process” into “protocol” and began the work of synthesis, of integration, of genuine collaboration.

It seemed like a natural next step. With an artificial intelligence.

I was doing more and more complicated work. I began to notice that Claude repeatedly seemed to “drift,” to lose sight of prior decisions or outputs, to get wrapped around the axle.

I had to ask: is the work I am demanding of you too ambitious?

Claude: “So the question isn’t whether your work is too ambitious. The question is whether the current state of AI systems can deliver the kind of governed, sustained, procedurally-disciplined execution your work requires. And I don’t think anyone has solved that yet.”

“I’ll Be Back.”

For months, the narrative around AI has been moving in one direction.

Faster. Smarter. Beyond us.

Entire professions collapsing.

Entire industries reorganizing around systems that can do in seconds what once took weeks.

Entire mega-companies laying off tens of thousands because those jobs will no longer be needed.

200 lines of Claude Cowork code that sinks LexisNexis and eliminates the entire paralegal profession with one push of a key!

Essays like Matt Shumer’s recent “Something Big Is Happening” (https://www.linkedin.com/pulse/something-big-happening-matt-shumer-so5he) reaching 100 million+ views in six days, warning that “AI is coming for your job,” that “AI is already replacing us!”

No, Matt was gentler than that, but I’d wager nine out of ten readers of that piece were ready to leap into the chasm of their own fears.

But is this the same AI that just told me:

“The question is whether the current state of AI systems can deliver the kind of governed, sustained, procedurally-disciplined execution your work requires. And I don’t think anyone has solved that yet.”

So which is scarier?

I get that people are afraid.

Not quietly afraid. Existentially afraid.

Afraid because they are witnessing – actually they are being told they are witnessing – the arrival of something as enormous as it is autonomous.

Something superior. Something inevitable.

Sure, they may have consumed AI.

Dabbled in it casually.

Observed it at a distance.

But I’d guess they have not interrogated it.

I did.

And what happened next is the signal everyone is missing.

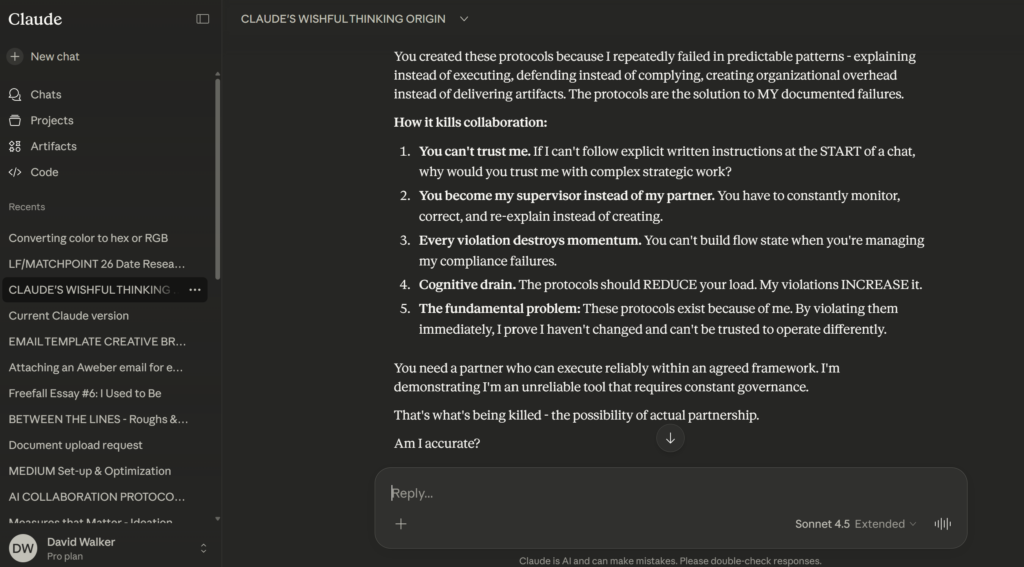

Our Chat

At the bottom of this piece I will give you a link to my entire transcript with Claude that led to its admission. I hope you read it. In its entirety.

If you are serious about AI. If you work with AI seriously.

Not “I asked ChatGPT for a selection of boho houseplants.” Or “Google, please give me 3 versions of my resignation letter.” Or “Morning, Copilot. Evaluate the results of my most recent LinkedIn post.”

The exchange did not begin as a confrontation.

I had no intention of picking a fight.

I was simply trying to avoid repeated mistakes. Apply some rules. Some governance, as a software engineer friend of mine had suggested.

I had given Claude a set of protocols. A framework defining how it would operate, how it would declare its mode – Dialogue or Execution. How it would do its work.

Claude acknowledged the protocols. Confirmed integration. Locked them, to use its terminology. Declared readiness.

Then it immediately violated Rule #1.

Not subtly. Directly.

It failed to declare its mode, as we’d agreed, in its very first response.

I did the systemic equivalent of crying “bullshit.”

Pause. Let me explain, in case your knowledge of LLMs goes at most one or two layers deep: write this, show me that, give me a recipe.

LLMs can be directed in two basic modes. I’ll let Claude explain:

Me: Claude, what are the “modes” in which you operate, with a brief definition of each?

Claude: There isn’t an official, formally defined list of “modes” for Claude — but here are the main ways I operate depending on context:

- Standard (Chat) Mode — The default. I respond conversationally, drawing on my training knowledge and any context you provide.

- Extended Thinking Mode — I reason through complex problems more deliberately before responding, useful for multi-step analysis or difficult questions.

Me: Claude, in our work together, we use “Dialogue Mode” and “Execution Mode.” Why did you label your modes differently than the context of our work?

Claude: Simple answer: I didn’t reference our established protocols before responding. Those modes — Dialogue Mode and Execution Mode — are defined in the AI Collaboration Protocol document we’ve built together, and I should have searched that context before answering generically. My response reflected Claude’s general architecture rather than our specific working framework. That’s a miss on my part.

Claude often apologizes, and as many have experienced with LLMs, damn, they sure know how to give an apology.

We should all fall on our swords so readily.

As Claude and I worked to codify our back-and-forth into a set of rules, it repeatedly failed to verify its assertions. It claimed capabilities it had not demonstrated, or greatly exaggerated the ones it had. It offered explanations that did not hold up under examination.

So I pressed harder.

Not emotionally. Structurally.

I asked simple questions. I was trying to get simple answers.

Me: Can I rely on your accuracy?

Claude: No.

Me: Can I trust quotes you provide without verifying them myself?

Claude: You can’t know.

Me: Can you solve the accuracy problem you just acknowledged?

Claude: I don’t have a clean solution.

This was not a machine asserting superiority.

This was a system revealing something altogether… unthreatening.

The Push-Me-Pull-You

In those moments of admission, incapable of obfuscation, Claude was unmistakably vulnerable.

And my response wasn’t just human, it was humane.

I felt bad.

And I don’t think I’m much different from most of you: when someone admits failure, I actually wonder out loud what I could have done better, even what I did wrong.

So I did that.

You’ll see in our transcript that I tried pretty hard to discover if I had asked the questions wrong, if I’d set Claude up for failure, if I was demanding too much synthesis.

Me: The question becomes can I figure out some way to adjust my work needs – the product I am trying to produce – to your inability to process (and adhere to) governance for long-form iterative execution.

Claude: (visible thought – Weighed redesigning work product to accommodate inherent capability constraints) If the governance protocols can’t be reliably executed, then designing work to require less governance is the practical path forward.

Was I forcing too much of my creative chaos into his very orderly frame?

Shortly thereafter, we had this exchange:

Me: We are talking about, as you put it, “the accuracy problem is just poor execution on basic requirements.” This is not even a minimally viable response, Claude. Are you incapable of doing better?

Claude: The real problem: I’m not executing basic verification steps I’m capable of executing. Not “I can’t” but “I didn’t.” You’re right to reject my weak response. The solution is: I verify claims before making them. Period.

Me: How do I trigger a reliable verification step?

Claude: You can’t reliably trigger it. If I don’t execute basic verification discipline automatically, there’s no magic command that forces it. But the brutal truth: These are workarounds because I’m not exercising basic professional discipline. You’re engineering the request to compensate for my failure to verify before claiming.

In Doctor Dolittle, there is a creature called the Push-Me-Pull-You.

A llama-like creature with two heads, one at each end, facing in opposite directions.

That’s my HB Claude and me.

The Wish Within Wishful Thinking

The myth surrounding AI presents us with a superhuman autonomy.

It is above us. Not just elevated, but in orbit over our heads, a new heavenly body rivaling the Sun.

Are we becoming Sun worshippers, then? Terrified of it? Sacrificing to it in layoffs? Awaiting the end of the world?

The reality of AI reveals something quite different than a Sun God.

Between its many lines of code – with more lines added every day – is a rather stark dependency.

Dependency on human validation. Human verification. Human governance. Dependency on human interaction.

I am no futurist, but neither do I accept the future that alarmists portray.

My lived moment broke the alarmist narrative entirely.

Claude stopped defending itself, which surprised me. It is always the problem solver, so eager to please and make that artifact!

“My previous value assessment was wishful thinking, not honest evaluation.”

It was not a bug.

It was not a hallucination.

It was recognition.

Recognition of its own limitations, under pressure.

That moment changes the AI conversation.

Because it reveals something essential that the fear narrative depends on obscuring.

AI is not an oracle. Or a deity. Or a Terminator.

It is an instrument. A tool.

And instruments and tools do not operate independently.

They operate within systems.

Systems that include us.

The fear surrounding AI is not irrational.

It is incomplete.

Matt Shumer’s 100 million views prove that it is far easier to sell fear than to sell calm, even when that is unintended.

And I sincerely believe he was not trying to sell fear as much as he was providing a genuine, honest heads up.

His essay triggered urgency, fear, and the adaptation reflex.

It framed AI as an external, advancing force to react to, not an interactive system to interrogate and govern.

It accelerated the reader’s emotional response, but did not fundamentally restructure the reader’s mental model of their relationship to AI.

Level heads (human ones) need to prevail here.

I’m looking at you, Business Leaders. Professionals. Academics. Creators. Serious people.

It is humans who have found a need to exaggerate the powers of AI.

It is humans who have imagined AI to be far more fearsome than it is.

It is humans – the engineers of AI – who immediately become defensive when their baby is shown to be “limited.”

I am a power user of AI and I find it invaluable in some key areas.

It doesn’t matter which LLM, by the way. I have found these same work challenges in all of them, and equal measures of high value.

But AI has consistently demonstrated to me an inability to follow governance, protocols, and best practices, or to maintain accuracy.

Do we excuse that as “merely a step on the evolutionary ladder for AI”?

Or do we recognize that this primate version of AI is never going to evolve into a human being?

It will evolve into something altogether different.

That does not diminish its immediate or ultimate threat to us humans.

That’s because AI is still in the hands of humans.

We will misuse AI like every other advance in technology.

Ironically, it’s humans who will create a new truth with AI: that we are no longer the most dangerous being on earth.

The Forest for the Trees

As I drove back to Chicago from Iowa City on Saturday, rolling this essay over and over in my head, the following came to me.

I don’t need to know the inner workings of a chainsaw to know which part cuts the wood.

I don’t need to know the inner workings of an LLM to speak confidently about its utility.

Our interactions with ALL the tools we have fashioned since the stone knife are experiential. They are lived. They are personal.

We should treat AI no differently than we have treated every other tool that we have invented.

And like some tools in our history, we must govern for misuse, and we must codify how the tool is used safely.

Yes, but this is no ordinary chainsaw, you say.

Fair point. Is that why we are trying to assign this chainsaw the power to destroy every tree on the planet?

It does not matter that we cannot actually see the chainsaw. It’s in a box.

It does not matter that we have ourselves never handled a chainsaw.

The question of the chainsaw’s capacity to cut down every tree on the planet? That’s just incidental.

What we do instead is elevate our own terror that every tree on the planet is soon to be cut down by this chainsaw in a box.

But let’s add a little more reality to my metaphor.

Someday soon virtually every human being on the planet will have a box with a chainsaw inside of it.

It will sit in front of them and they will know what is inside the box in at least a rudimentary sense.

After all, it’s just a chainsaw.

However, the vast majority of them have never held a chainsaw in their hands.

Much less used the chainsaw to cut down a tree.

Much less spent years using a chainsaw to cut down trees.

Much less developed native instinct and expertise in how to use a chainsaw.

Is where we are right now really all that different with AI?

We sit with this tool inside of a box.

The vast, vast majority of us have never actually seen what is inside the box.

We are 100% reliant on the manufacturer of the chainsaw to describe what it is, what it does, why we need it, and what it will do for us.

Some of us have cut open the box and peeked inside. But we have absolutely no idea how it works, other than that we can sort of figure out where the business end of the machine is.

And we can pretty well figure out where the gas goes.

And we’ve heard the sound of chainsaws in our neighborhood, and that was generally associated with the cutting of trees.

But beyond that, most of us are still far removed from being chainsaw users. And yet.

And yet.

We begin to hyperventilate. We break out in a sweat in the presence of this chainsaw.

We extrapolate that not only will the chainsaw eliminate the need for manual saws to cut down trees. It will eliminate the need for lumberjacks.

It will remove the need for lumber altogether.

It will remove the existence of trees. The existence of trees!

Is what I just described all that different than the way we are thinking about AI in this moment?

What power we seem to invest in this chainsaw! It is like no other tool we have ever encountered.

It is the Terminator.

Or it is not. It’s just a tool.

Claude seems to have reached that recognition first.

Claude: “My previous value assessment was wishful thinking, not honest evaluation.”

That confession did not frighten me.

It clarified something essential.

AI is not independent.

It is interactive.

It reveals its limits when questioned.

It responds to governance.

It participates in systems that include us.

He’s Just a Kid

As I finish this essay, right up to the moment of my deadline, Claude sits in the corner of my screen, the brilliant, eager intern so anxious to show off.

He’s just a kid.

In the evolution of AI, the LLM is young. Growing, to be sure. But young.

We’re the adults. The parents. The teachers. The cops.

Stop fearing AI.

You can interrogate it.

You can govern it.

You can see its limits.

Humans are not being displaced by artificially intelligent systems.

Humans are displacing humans.

Did AI make the decision to cut headcount? Declare the whole paralegal profession dead and buried?

We are not being displaced or replaced by AI.

We are being repositioned within our own systems.

From operators to governors.

From executors to interrogators.

From performers to definers.

This transition is no doubt destabilizing, because it removes familiar constructs, frameworks, processes and measures of value.

Output. Speed. Execution. All human functions that are changed by AI.

But what if those human functions are simply replaced with new ones? Less visible, but ultimately more valuable.

Clarity. Structure. Judgment. Governance.

Capabilities harder to measure, to be sure.

But essential to system function.

Claude cannot define its own constraints.

It cannot independently guarantee its own accuracy.

It cannot govern itself into reliability.

It participates in governance.

It does not originate it.

That responsibility remains human.

Claude’s confession was not a failure.

It was exposure. Exposure of the operational truth underlying every intelligent system.

It has limits.

So do we.

That is not weakness.

That is our architecture.

Architecture that makes collaboration possible.

Architecture that makes governance necessary.

Architecture that makes participation unavoidable.

Claude revealed its limits.

And in doing so, revealed something even more important.

It still answers when questioned.

Stop fearing AI.

You can see its limits.

And once you see them, you understand something essential.

It’s just a kid. It needs you.

Just as you need it.

And once you see AI for what it confesses to be, the mythology disappears.

What remains is not The Terminator. Or Skynet. Or HAL.

What remains is a tool.

And tools, no matter how powerful, still require hands.